Introduction

What is KServe?

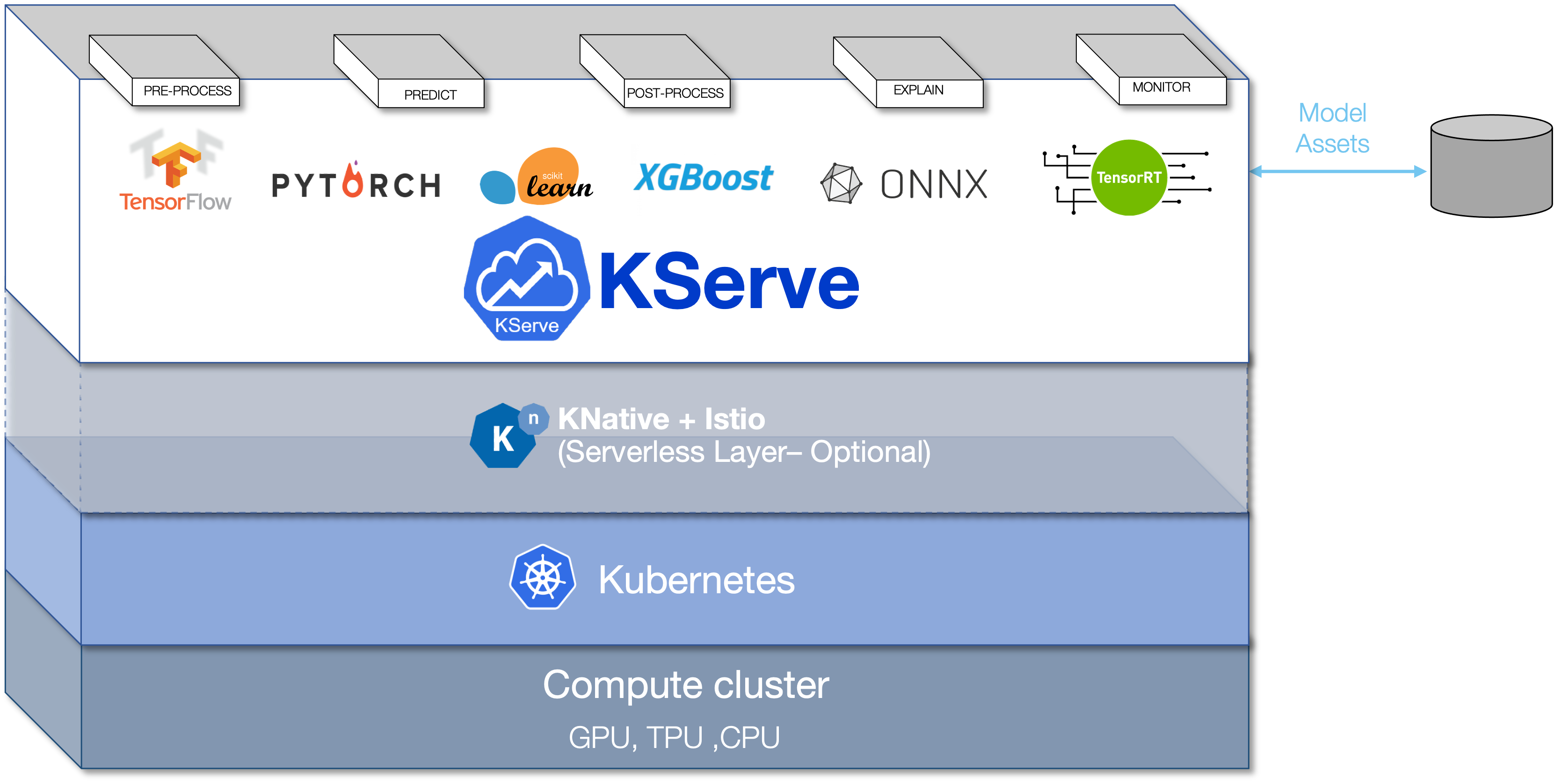

KServe enables serverless inference on Kubernetes. It provides performant, high-abstraction interfaces for common machine learning (ML) frameworks such as TensorFlow, XGBoost, scikit-learn, PyTorch, and ONNX to solve production model-serving use cases.

You can use KServe to do the following:

- Provide a Kubernetes Custom Resource Definition for serving ML models on arbitrary frameworks.

- Encapsulate the complexity of autoscaling, networking, health checking, and server configuration to bring cutting-edge serving features such as GPU autoscaling, scale to zero, and canary rollouts to your ML deployments.

- Run a simple, pluggable, and complete production ML inference server, with prediction, pre-processing, post-processing, and explainability available out of the box.
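As a rough illustration of the custom resource mentioned in the list above, the following sketch builds an `InferenceService` manifest as a plain Python dictionary and prints it as JSON, which `kubectl apply -f` also accepts. The service name, the storage URI, and the helper function `build_inference_service` are hypothetical placeholders, and the field layout (`spec.predictor.sklearn.storageUri`, `minReplicas`, `canaryTrafficPercent`) assumes the `serving.kserve.io/v1beta1` API. Check the KServe API reference for your installed version before relying on it.

```python
import json


def build_inference_service(name, storage_uri, min_replicas=0, canary_percent=None):
    """Return an InferenceService manifest as a plain dict (illustrative helper)."""
    predictor = {
        # minReplicas of 0 asks for scale to zero when the service is idle.
        "minReplicas": min_replicas,
        # Assumption: a scikit-learn model stored at a placeholder location.
        "sklearn": {"storageUri": storage_uri},
    }
    if canary_percent is not None:
        # Share of traffic routed to the newest revision during a canary rollout.
        predictor["canaryTrafficPercent"] = canary_percent
    return {
        "apiVersion": "serving.kserve.io/v1beta1",
        "kind": "InferenceService",
        "metadata": {"name": name},
        "spec": {"predictor": predictor},
    }


manifest = build_inference_service(
    name="sklearn-demo",  # hypothetical service name
    storage_uri="gs://example-bucket/models/sklearn/model",  # hypothetical URI
    canary_percent=10,
)
print(json.dumps(manifest, indent=2))
```

Saving the printed JSON to a file and applying it with `kubectl apply -f` is one way to try this out on a cluster where KServe is already installed.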

KFServing is now KServe

KFServing was renamed to KServe in September 2021.

The KFServing GitHub repository has been transferred to an independent KServe GitHub organization under the stewardship of the Kubeflow Serving Working Group leads.

For information about migrating from KFServing to KServe, see the KServe migration guide.
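One visible effect of the rename is that the API group of the `InferenceService` resource changed from `serving.kubeflow.org` to `serving.kserve.io`. The sketch below shows only that one step, applied to a manifest held as a Python dictionary. The function name `to_kserve_api_group` and the sample manifest are hypothetical, and a real migration involves more than editing this field, so treat the migration guide as the source of truth.

```python
import copy

OLD_GROUP = "serving.kubeflow.org"  # API group used by KFServing
NEW_GROUP = "serving.kserve.io"     # API group used by KServe


def to_kserve_api_group(manifest):
    """Return a copy of the manifest with the KFServing API group replaced."""
    updated = copy.deepcopy(manifest)
    group, sep, version = updated.get("apiVersion", "").partition("/")
    # Only rewrite manifests that actually use the old group; keep the version.
    if sep and group == OLD_GROUP:
        updated["apiVersion"] = f"{NEW_GROUP}/{version}"
    return updated


# Hypothetical legacy manifest, for illustration only.
legacy = {
    "apiVersion": "serving.kubeflow.org/v1beta1",
    "kind": "InferenceService",
    "metadata": {"name": "sklearn-demo"},
}
migrated = to_kserve_api_group(legacy)
print(migrated["apiVersion"])
```

The function copies its input rather than modifying it, so the original manifest stays available for comparison or rollback.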

Architecture

Installation

Install from Kubeflow

Kubeflow provides Kustomize installation files in the kubeflow/manifests repo with each Kubeflow release.

However, these files may not be up to date with the latest KServe release.

You can also review the example of running KServe on Istio and Dex in the kserve/kserve repository, which is relevant to a Kubeflow installation.

Install from KServe

You can install KServe without Kubeflow by following the KServe getting started guide.

Resources

Further reading

- Kubeflow 101: What is KFServing?

- KFServing 101 slides

- KubeCon: Introducing KFServing

- Serving Machine Learning Models at Scale Using KServe - Yuzhui Liu, Bloomberg

- KServe (Kubeflow KFServing) Live Coding Session

- TFiR: Let’s Talk About IBM’s ModelMesh, KServe And Other Open Source AI/ML Technologies | Animesh Singh

- KubeCon 2021: Serving Machine Learning Models at Scale Using KServe | Animesh Singh
